
trie: reduce allocations in stacktrie #30743


Update

New progress


Ah wait, 24B: that is the size of a slice, i.e. a pointer and two ints. It is the slice passed by value, escaped to heap (correctly so). But how to avoid it??

Solved!


Did a sync on

@holiman I think we should compare the relevant metrics (memory allocation, etc.) during the snap sync to see the overall impact of this change.

    @@ -40,6 +40,20 @@ func (n *fullNode) encode(w rlp.EncoderBuffer) {
        w.ListEnd(offset)
    }

    func (n *fullnodeEncoder) encode(w rlp.EncoderBuffer) {


Can you estimate the performance and allocation differences between using this encoder and the standard full node encoder?

The primary distinction seems to be that this encoder inlines the children encoding directly, rather than invoking the children encoder recursively. I’m curious about the performance implications of this approach.


Sure. If I change it back (adding back rawNode, and switching the case branchNode: back so that it looks like it did earlier), then the difference is:

    [user@work go-ethereum]$ benchstat pr.bench pr.bench..2
    goos: linux
    goarch: amd64
    pkg: github.com/ethereum/go-ethereum/trie
    cpu: 12th Gen Intel(R) Core(TM) i7-1270P
                 │   pr.bench   │          pr.bench..2           │
                 │    sec/op    │    sec/op     vs base          │
    Insert100K-8   88.67m ± 33%   88.20m ± 11%  ~ (p=0.579 n=10)

                 │   pr.bench   │               pr.bench..2                 │
                 │     B/op     │      B/op        vs base                  │
    Insert100K-8   3.451Ki ± 3%   2977.631Ki ± 0%  +86178.83% (p=0.000 n=10)

                 │  pr.bench  │               pr.bench..2                │
                 │ allocs/op  │   allocs/op     vs base                  │
    Insert100K-8   22.00 ± 5%   126706.50 ± 0%  +575838.64% (p=0.000 n=10)

When we build the children struct, all the values are copied, as opposed to the encoder type, which uses the same child without triggering a copy.
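One plausible mechanism behind numbers like these can be reproduced in isolation. The sketch below is a simplified stand-in, not the trie package's code: `node`, `hashNode`, `fullNode` and `fullnodeEncoder` are cut-down hypothetical versions, and `bytes.Buffer` replaces `rlp.EncoderBuffer`. It compares children stored as interface values, where each slice has to be boxed, with children stored as plain byte slices.

```go
package main

import (
	"bytes"
	"fmt"
	"testing"
)

// node mimics an interface-typed trie child (simplified, hypothetical).
type node interface{ encode(w *bytes.Buffer) }

// hashNode is a byte slice that satisfies node.
type hashNode []byte

func (n hashNode) encode(w *bytes.Buffer) { w.Write(n) }

// fullNode stores its children as interface values.
type fullNode struct{ Children [17]node }

func (n *fullNode) encode(w *bytes.Buffer) {
	for _, c := range n.Children {
		if c != nil {
			c.encode(w) // dynamic call through the interface
		}
	}
}

// fullnodeEncoder stores its children as plain byte slices.
type fullnodeEncoder struct{ Children [17][]byte }

func (n *fullnodeEncoder) encode(w *bytes.Buffer) {
	for _, c := range n.Children {
		if c != nil {
			w.Write(c)
		}
	}
}

// measure returns the allocations per encode for both representations.
func measure() (viaNode, viaEncoder float64) {
	var hashes [16][]byte
	for i := range hashes {
		hashes[i] = bytes.Repeat([]byte{byte(i)}, 32)
	}
	var w bytes.Buffer
	w.Grow(17 * 32) // large enough that writing never reallocates

	viaNode = testing.AllocsPerRun(100, func() {
		w.Reset()
		var n fullNode
		for i, h := range hashes {
			n.Children[i] = hashNode(h) // boxes the slice header
		}
		n.encode(&w)
	})
	viaEncoder = testing.AllocsPerRun(100, func() {
		w.Reset()
		var n fullnodeEncoder
		for i, h := range hashes {
			n.Children[i] = h // plain copy of the header, stays on the stack
		}
		n.encode(&w)
	})
	return viaNode, viaEncoder
}

func main() {
	viaNode, viaEncoder := measure()
	fmt.Printf("interface children: %v allocs/op\n", viaNode)
	fmt.Printf("byte-slice children: %v allocs/op\n", viaEncoder)
}
```

In this sketch the byte-slice variant is expected to run without allocating, while the interface variant pays for every child it boxes. The real benchmark involves more than this one effect, so the ratio here should not be read as a prediction of the figures above.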

    n := shortNode{Key: hexToCompactInPlace(st.key), Val: valueNode(st.val)}

    n.encode(t.h.encbuf)
    {


We can also use shortNodeEncoder here?

I would recommend not to do the inline encoding directly.


We could, but here we know we have a valueNode. And the valueNode just does:

    func (n valueNode) encode(w rlp.EncoderBuffer) {
        w.WriteBytes(n)
    }

If we change that to a method which checks the size and theoretically does different things, then the semantics become slightly changed.

    // shortNodeEncoder is a type used exclusively for encoding. Briefly instantiating
    // a shortNodeEncoder and initializing with existing slices is less memory
    // intense than using the shortNode type.
    shortNodeEncoder struct {


qq, does shortNodeEncoder need to implement the node interface?

It's just an encoder which converts the node object to byte format?


just have a try, it's technically possible


    @@ -27,6 +27,7 @@ import (

    var (
        stPool = sync.Pool{New: func() any { return new(stNode) }}
        bPool  = newBytesPool(32, 100)


any particular reason to not use sync.Pool?


Yes! You'd be surprised, but the whole problem I had with the extra alloc: I solved that by not using a sync.Pool. I suspect it's due to the interface conversion, but I don't know any deeper details about the why of it.
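The suspicion about interface conversion can be tested outside the trie. The following is a minimal sketch, not the PR's actual pool: only the `newBytesPool(32, 100)` call is visible in the diff, so the channel-backed `bytesPool` type, its `Get`/`Put` methods and the two measuring helpers are assumptions made for illustration.

```go
package main

import (
	"fmt"
	"sync"
	"testing"
)

// bytesPool is a hypothetical pool of byte slices backed by a buffered
// channel. Slices pass through the channel as plain []byte values, so they
// are never converted to an interface.
type bytesPool struct {
	c chan []byte
	w int // capacity of freshly allocated slices
}

func newBytesPool(sliceCap, nitems int) *bytesPool {
	return &bytesPool{c: make(chan []byte, nitems), w: sliceCap}
}

// Get returns a pooled slice of length zero, or a new one if the pool is empty.
func (bp *bytesPool) Get() []byte {
	select {
	case b := <-bp.c:
		return b[:0]
	default:
		return make([]byte, 0, bp.w)
	}
}

// Put hands a slice back to the pool; it is dropped if the pool is full.
func (bp *bytesPool) Put(b []byte) {
	if cap(b) < bp.w {
		return // too small to be useful
	}
	select {
	case bp.c <- b:
	default: // pool is full, leave the slice to the GC
	}
}

// allocsChanPool measures allocations per Get/Put cycle on bytesPool.
func allocsChanPool() float64 {
	p := newBytesPool(32, 100)
	return testing.AllocsPerRun(1000, func() {
		b := p.Get()
		b = append(b, 1, 2, 3)
		p.Put(b)
	})
}

// allocsSyncPool measures the same cycle on a sync.Pool holding []byte values.
func allocsSyncPool() float64 {
	p := sync.Pool{New: func() any { return make([]byte, 0, 32) }}
	return testing.AllocsPerRun(1000, func() {
		b := p.Get().([]byte)
		b = append(b[:0], 1, 2, 3)
		p.Put(b) // converting []byte to any boxes the slice header
	})
}

func main() {
	fmt.Printf("channel pool: %v allocs/op\n", allocsChanPool())
	fmt.Printf("sync.Pool:    %v allocs/op\n", allocsSyncPool())
}
```

Storing `[]byte` values in a `sync.Pool` costs one small allocation per `Put`, because the slice header is copied to the heap when it becomes an `any`. Pooling `*[]byte` would be another way around that; the channel variant avoids interfaces altogether, at the price of a fixed upper bound on pooled items.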

    @@ -129,6 +143,12 @@ const (
    )

    func (n *stNode) reset() *stNode {
        if n.typ == hashedNode {


What's the difference between HashedNode and Embedded tiny node?

For embedded node, we also allocate the buffer from the bPool and the buffer is owned by the node itself right?


Well, the thing is that there's only one place in the stacktrie where we convert a node into a hashedNode:

    st.typ = hashedNode
    st.key = st.key[:0]
    st.val = nil // Release reference to potentially externally held slice.

This is the only place. So we know that once something has been turned into a hashedNode, the val is never externally held and thus it can be returned to the pool. The big problem we had earlier is that we need to overwrite the val with a hash, but we are not allowed to mutate val. So this was a big cause of runaway allocs.

But now we reclaim those values-which-are-hashes since we know that they're "ours".
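The ownership rule described here can be sketched with a toy node. Everything below is hypothetical and heavily simplified: `pool`, the two-field `stNode`, the fake `hash` method and the extra `pool` parameter on `reset` exist only to show when a value may be recycled.

```go
package main

import "fmt"

type nodeType uint8

const (
	leafNode nodeType = iota
	hashedNode
)

// pool is a hypothetical single-goroutine free list of byte slices.
type pool struct{ free [][]byte }

func (p *pool) get() []byte {
	if n := len(p.free); n > 0 {
		b := p.free[n-1]
		p.free = p.free[:n-1]
		return b[:0]
	}
	return make([]byte, 0, 32)
}

func (p *pool) put(b []byte) { p.free = append(p.free, b) }

// stNode is a cut-down stand-in for a stacktrie node.
type stNode struct {
	typ nodeType
	val []byte // caller-owned for leaves, pool-owned once hashed
}

// hash replaces the value with a fake 32-byte hash. The caller's slice is
// never written to; the hash goes into a pooled buffer instead.
func (n *stNode) hash(p *pool) {
	h := p.get()
	for i := 0; i < 32; i++ {
		h = append(h, byte(i)) // stand-in for a real hash function
	}
	n.typ = hashedNode
	n.val = h // releases the reference to the externally held slice
}

// reset recycles val only when the node is known to own it.
func (n *stNode) reset(p *pool) *stNode {
	if n.typ == hashedNode {
		p.put(n.val)
	}
	n.typ = leafNode
	n.val = nil
	return n
}

func main() {
	p := &pool{}
	external := []byte("caller-owned value")

	n := &stNode{typ: leafNode, val: external}
	n.reset(p) // leaf: val may be held externally, so it is not pooled
	fmt.Println("pooled after leaf reset:", len(p.free))

	n.val = external
	n.hash(p)
	n.reset(p) // hashed: val came from the pool and goes back to it
	fmt.Println("pooled after hashed reset:", len(p.free))
	fmt.Println("caller's slice untouched:", string(external))
}
```

The type check in `reset` is what makes the recycling safe: a buffer is only returned to the pool if the node itself obtained it there.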


For embedded node, we also allocate the buffer from the bPool and the buffer is owned by the node itself right?

To answer your question: yes. So from the perspective of slice-reuse, there is zero difference.


Btw, in my follow-up PR to reduce allocs in derivesha (#30747), I remove this trick again.

In that PR, I always copy the value as it enters the stacktrie. So we always own the val slice, and are free to reuse it via pooling.

Doing so is less hacky: we get rid of the special case "val is externally held unless it's a hashedNode, because then we own it".

That makes it possible for the derivesha method to reuse the input buffer.
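The copy-on-entry idea can be illustrated with a small container. This is a sketch under assumptions, not code from #30747: the `store` type and its `insert` and `reset` methods are invented here to show why owning every value removes the special case.

```go
package main

import "fmt"

// store is a hypothetical container that copies every value on insert and
// therefore owns all the slices it holds.
type store struct {
	free [][]byte // recycled buffers
	vals [][]byte // values owned by the store
}

// insert copies val into a buffer owned by the store, reusing a recycled
// buffer when one is available.
func (s *store) insert(val []byte) {
	var buf []byte
	if n := len(s.free); n > 0 {
		buf, s.free = s.free[n-1][:0], s.free[:n-1]
	}
	s.vals = append(s.vals, append(buf, val...))
}

// reset recycles every stored value; no ownership check is needed.
func (s *store) reset() {
	s.free = append(s.free, s.vals...)
	s.vals = s.vals[:0]
}

func main() {
	s := &store{}
	input := []byte("abc")

	s.insert(input)
	input[0] = 'x' // the caller is free to reuse its buffer right away
	fmt.Println(string(s.vals[0]), string(input))

	s.reset()
	s.insert([]byte("de")) // lands in the recycled buffer
	fmt.Println(string(s.vals[0]), len(s.free))
}
```

The trade-off is one copy per inserted value in exchange for unconditional buffer reuse and a caller that never has to keep its input alive.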

lint is red after the latest commit

    goos: linux
    goarch: amd64
    pkg: github.com/ethereum/go-ethereum/trie
    cpu: 12th Gen Intel(R) Core(TM) i7-1270P
                 │ stacktrie.3  │          stacktrie.4          │
                 │    sec/op    │    sec/op     vs base         │
    Insert100K-8   69.50m ± 12%   74.59m ± 14%  ~ (p=0.128 n=7)

                 │ stacktrie.3  │          stacktrie.4           │
                 │     B/op     │     B/op      vs base          │
    Insert100K-8   4.640Mi ± 0%   3.112Mi ± 0%  -32.93% (p=0.001 n=7)

                 │ stacktrie.3 │          stacktrie.4          │
                 │  allocs/op  │  allocs/op   vs base          │
    Insert100K-8   226.7k ± 0%   126.7k ± 0%  -44.11% (p=0.001 n=7)


Running a snapsync benchmarkers now, using


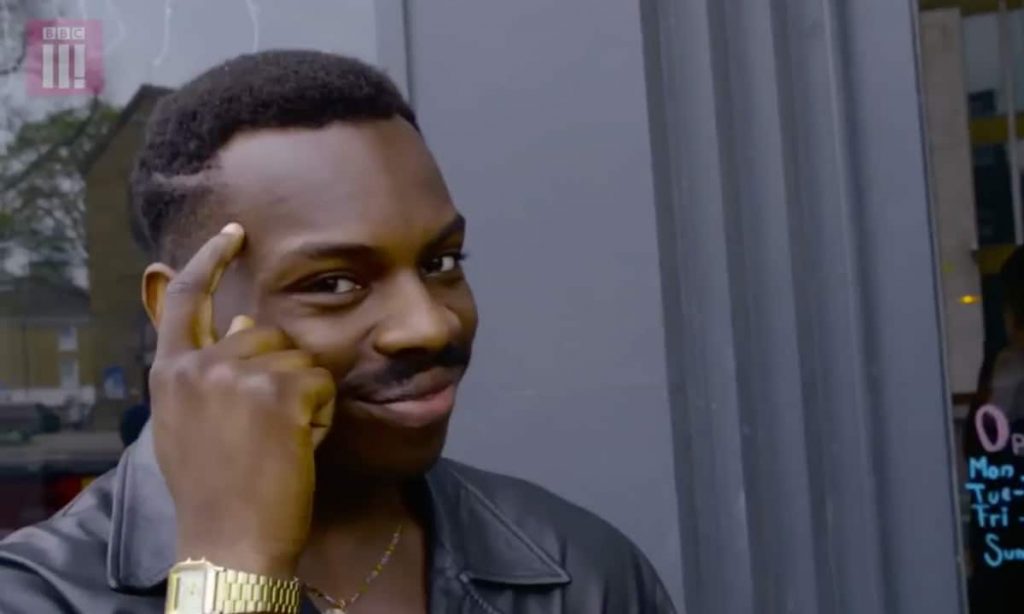

So, here are some charts. The first third of the charts is the snapsync; the latter two thirds are snap-gen + block-by-block sync. The yellow (this PR) finished slightly faster (13 minutes or so). This is despite that the [...] The memory charts don't show much, except that maybe the lead that [...]

This PR uses various tweaks and tricks to make the stacktrie near alloc-free.

Also improves derivesha: