Support residual/skip connections #56

Comments


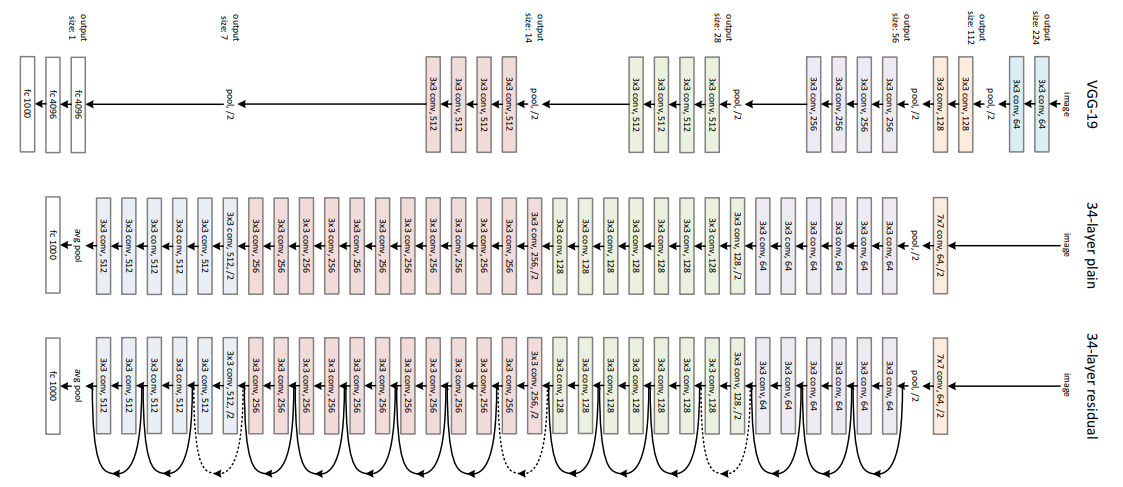

In general, I've been trying to avoid adding support for network layouts that are best specified by code, because TensorBoard model graphs already exist. The only diagrams I've seen of ResNets so far look like those that TensorBoard would support. Doing some googling now, I saw this diagram, which was neat. What did you have in mind?


The second one sure does look very neat! When I originally wrote the post I had something like this in mind: a very simplistic diagram of an EDSR-inspired network that I spun up on draw.io in a dozen minutes or so (spoiler: the architecture doesn't work). So not necessarily a very dense network like RDN; just a single skip connection would suffice.

This is not an issue, just a simple suggestion: would it be possible to introduce residual/skip connections in the form of extra directed arrows in the network graph? Perhaps the skip connection could feed into a node where the aggregation operator (addition, or any other function) can be specified in LaTeX.

I understand that skip connections might be complex on the UX side, since they break the "linearity" of the list of layers. However, having them would allow complete modeling of almost all possible CNN architectures, so I'd really appreciate the addition.
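
To make the suggestion concrete, here is a minimal sketch of one possible data model, assuming the layer list stays linear and skip connections are stored separately as extra directed edges. All names (`Network`, `SkipConnection`, `add_skip`) are hypothetical and are not taken from the project's code.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class SkipConnection:
    """Hypothetical extra directed arrow between two layers."""
    source: int          # index of the layer whose output is reused
    target: int          # index of the layer it is merged into
    operator: str = "+"  # aggregation label, could hold LaTeX such as r"\oplus"


@dataclass
class Network:
    """Hypothetical model: a linear layer list plus optional skip edges."""
    layers: List[str]
    skips: List[SkipConnection] = field(default_factory=list)

    def add_skip(self, source: int, target: int, operator: str = "+") -> None:
        # Only allow forward arrows between layers that exist.
        if not (0 <= source < target < len(self.layers)):
            raise ValueError("skip must point forward between existing layers")
        self.skips.append(SkipConnection(source, target, operator))

    def edges(self) -> List[Tuple[int, int, Optional[str]]]:
        # Sequential edges come from the list order; skip edges are appended.
        sequential = [(i, i + 1, None) for i in range(len(self.layers) - 1)]
        extra = [(s.source, s.target, s.operator) for s in self.skips]
        return sequential + extra


net = Network(["Input", "Conv", "ReLU", "Conv", "Output"])
net.add_skip(1, 3, operator="+")  # a single residual connection

for src, dst, op in net.edges():
    label = f" [{op}]" if op else ""
    print(f"{net.layers[src]} -> {net.layers[dst]}{label}")
```

Keeping the skip edges in their own list means the existing list of layers would not need to change shape; a renderer could draw the sequential arrows as before and add the extra arrows with their operator labels on top.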
